I’m using an ACS714 current sensor and an Arduino Mega 2560 for power measurement and RC servo control. It works, but the current measurement is not accurate: the more current flows through the sensor, the larger the error gets. I use a wattmeter as the benchmark, and have confirmed its readings with a multimeter where possible.

I basically followed this how-to:

and also this forum post:

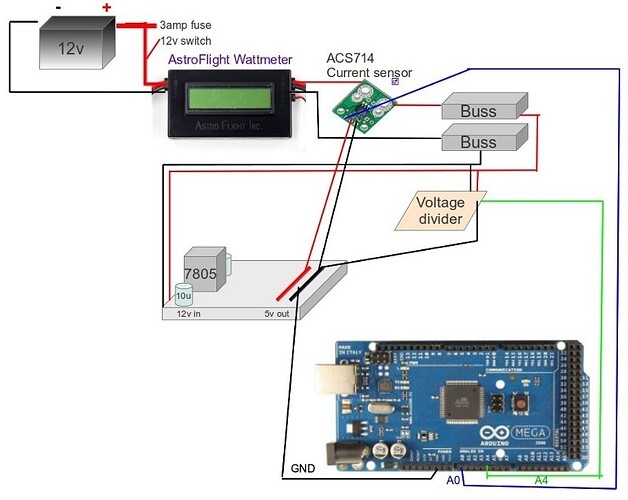

Here is an ugly diagram. Hope it is understandable, anyway.

The voltage divider is the same as in the how-to: R1 = 12 kΩ and R2 = 5 kΩ. The breadboard voltage regulator follows the Mintduino build, using a 7805 regulator and 10 µF capacitors. It takes in 12 V and outputs ~4.94 V. I use it as a low-voltage power bus, supplying the ACS714 and some RC servos.

To get the voltage measurement correct, I edited this line:

pinVoltage = avgBVal * 0.00705;

To get the current measurement “close”, I edited this line:

outputValue = ((((long)avgSAV * 5000 / 1024) - 2525 ) * 1000 / 66) * -1;
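
To make it easier to see what that line does, here is a minimal sketch of the same arithmetic split into named steps. The function name and constant names are made up for illustration and are not from the how-to. The numbers are the ones in the line above, and the sketch assumes the ADC reference is exactly 5.000 V and that `avgSAV` is an averaged 10-bit reading (0 to 1023).

```cpp
// Hypothetical helper: same integer math as the one-line formula above.
// All constant names are illustrative; values come from the original line.
constexpr long kAdcRefMilliVolts     = 5000;  // assumption: ADC reference is exactly 5.000 V
constexpr long kAdcSteps             = 1024;  // 10-bit ADC
constexpr long kZeroOffsetMilliVolts = 2525;  // sensor output at 0 A, hand-tuned
constexpr long kMilliVoltsPerAmp     = 66;    // sensitivity used in the original line

long adcToMilliAmps(long avgAdcCounts) {
    // Step 1: ADC counts -> millivolts at the analog pin (integer division truncates).
    long sensorMilliVolts = avgAdcCounts * kAdcRefMilliVolts / kAdcSteps;

    // Step 2: subtract the zero-current offset, then scale mV -> mA.
    long milliAmps = (sensorMilliVolts - kZeroOffsetMilliVolts) * 1000 / kMilliVoltsPerAmp;

    // Step 3: flip the sign, as the "* -1" in the original line does.
    return -milliAmps;
}
```

With these constants a reading of 512 counts comes out as 378 mA and a reading of 531 counts as -1015 mA. One ADC count is about 4.88 mV, which at 66 mV/A is roughly 74 mA, so the result can only move in steps of about that size. The result also depends directly on the three assumed values (5000, 2525 and 66), so if any of them does not match the real hardware, the error would grow with current.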

Could the inaccuracy of the current measurement be related to the voltage regulator, either because it is not supplying a full 5 V to the current sensor, or because its output is too choppy or noisy? I’m not sure how to proceed with troubleshooting this. I’m very new to electronics and there are many things I don’t understand, but I’m still learning slowly, so any guidance is most appreciated. Thanks.